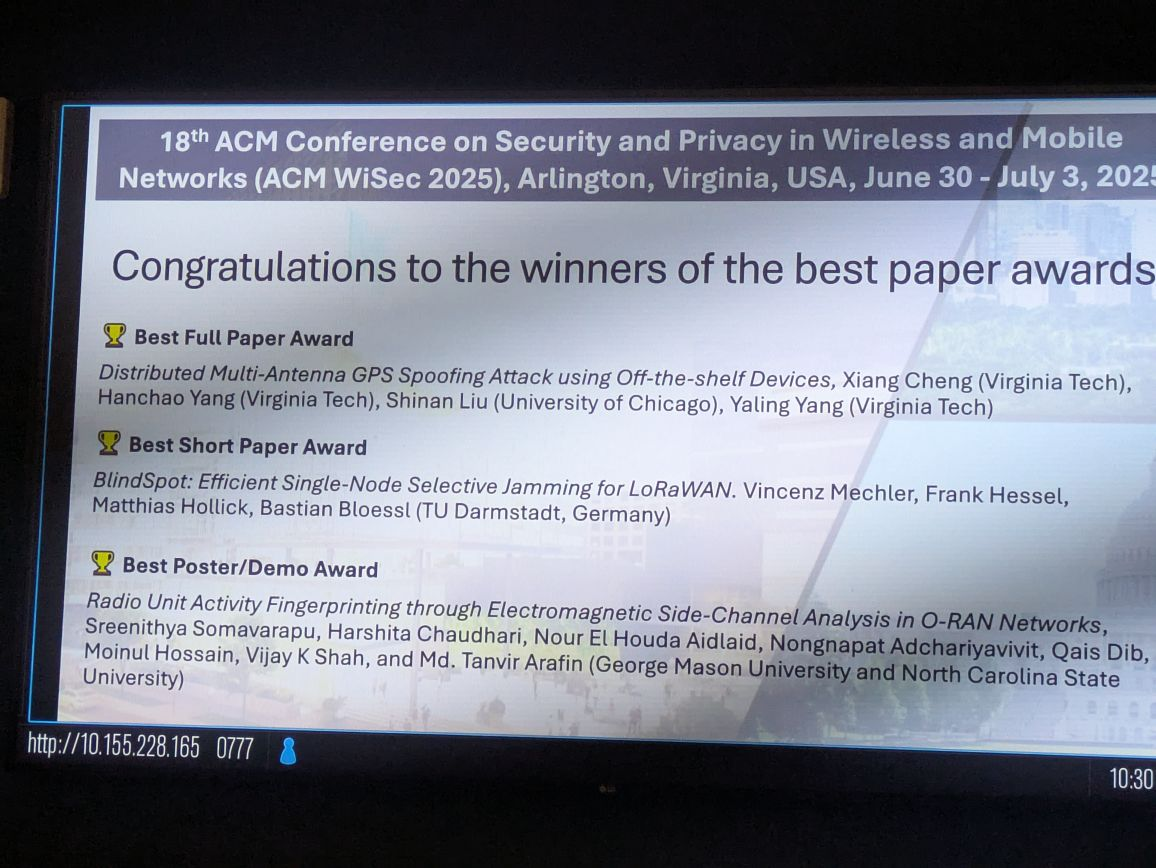

We are happy to receive the Best Short Paper Award @ ACM WiSec’25 for our paper on BlindSpot. Yay!

- Vincenz Mechler, Frank Hessel, Matthias Hollick and Bastian Bloessl, “BlindSpot: Efficient Single-Node Selective Jamming for LoRaWAN,” Proceedings of 18th ACM Conference on Security and Privacy in Wireless and Mobile Networks (WiSec 2025), Arlington, VA, June 2025.

[DOI, BibTeX, PDF and Details…]

- Vincenz Mechler, Frank Hessel, Matthias Hollick and Bastian Bloessl, “Illuminating the BlindSpot: Efficient Single-Node Selective Jamming for LoRaWAN,” Proceedings of 18th ACM Conference on Security and Privacy in Wireless and Mobile Networks (WiSec 2025), Demo Session, Arlington, VA, June 2025.

[DOI, BibTeX, PDF and Details…]

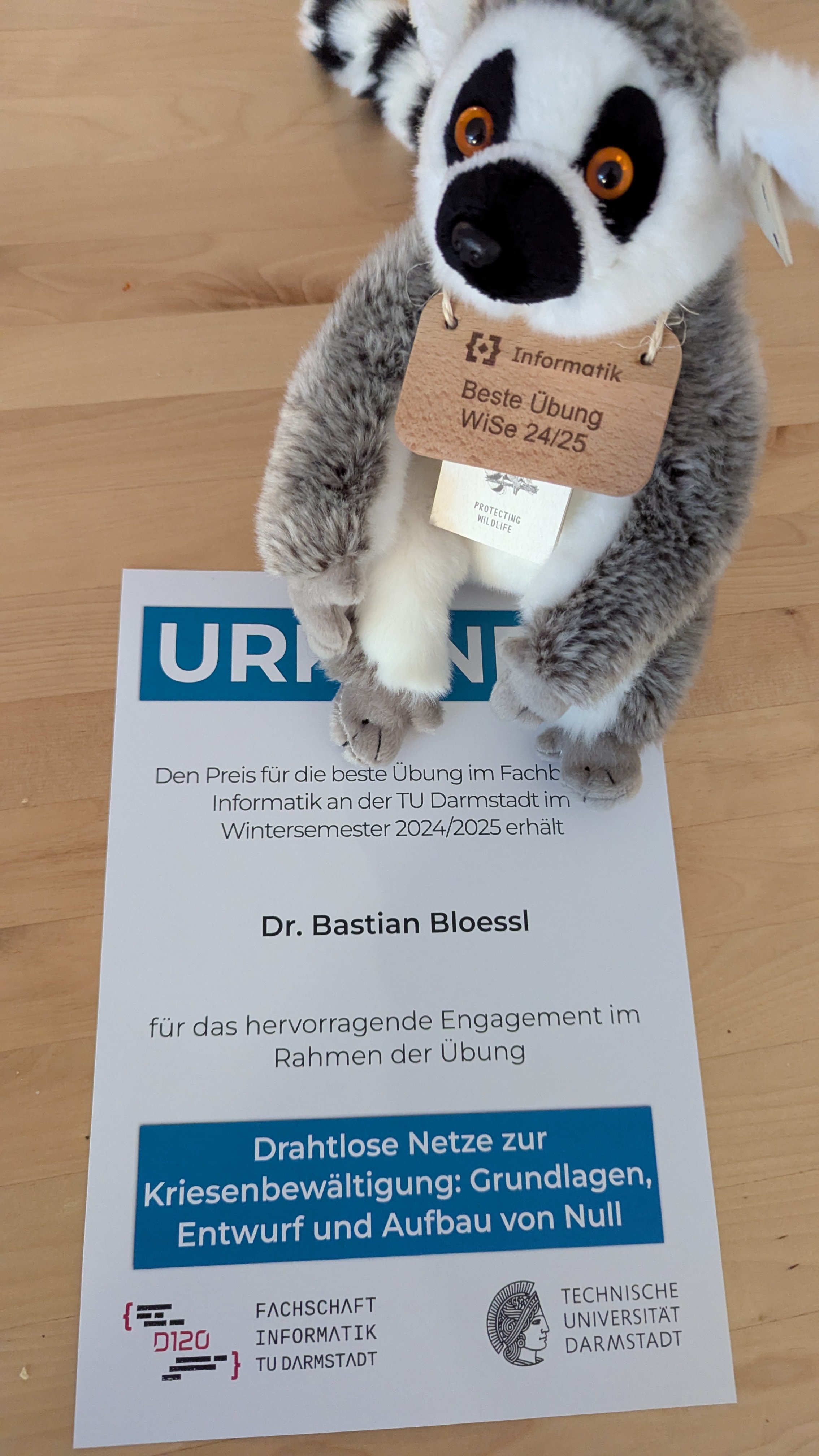

I am happy to receive the award for the best exercise in the winter term 2024/25 from the computer science student council for my lecture on crisis communication.

Thanks to Jakob Link, Malte Klas, Michael Schneider, and Florian Tillmann for the support!

Our FutureSDR + IPEC demo has been accepted at ACM MobiCom 2023. Yay!

In the demo, we have the same FutureSDR receiver running on three very different platforms:

- a normal laptop, interfacing an Aaronia Spectran v6 SDR

- a web browser, compiled to WebAssembly and interfacing a HackRF SDR through WebUSB

- an AMD/Xilinx RFSoC ZCU111 evaluation board

On the ZCU111, the same decoder is implemented both in software (using FutureSDR) and in hardware (using IPEC).

Since both implementations have the same structure, we can configure at runtime the decoding stage after which processing switches from the FPGA to the CPU.

With regard to FutureSDR, this highlights two important features:

- We show the portability of FutureSDR, having the same receiver running on three very different platforms.

- We show that the software implementation is capable of offloading different parts of the decoding dynamically during runtime.

Please check out the paper for further information or visit our booth at the conference.

- David Volz, Andreas Koch and Bastian Bloessl, “Software-Defined Wireless Communication Systems for Heterogeneous Architectures,” Proceedings of 29th Annual International Conference on Mobile Computing and Networking (MobiCom 2023), Demo Session, Madrid, Spain, October 2023.

[DOI, BibTeX, PDF and Details…]

With all the cool Software Defined Radio WebAssembly projects popping up, it seems like 2023 is the year of SDR in the browser :-)

I recently worked on improving the WebAssembly support for FutureSDR and am pretty happy with the result.

It no longer requires a lot of tricks to cross-compile the native driver with Emscripten; instead, it enables a complete Rust workflow.

The current user experience is shown in a tutorial-style live coding video, where I port a native FutureSDR ZigBee receiver to WebAssembly.

David Volz and I presented our demo FutureSDR meets IPEC at the Berlin 6G Conference, a meeting of all 6G-related projects, funded by the Federal Ministry of Education and Research (BMBF).

Our demo showed how FutureSDR can be used to implement platform-independent real-time signal processing applications that can be reconfigured during runtime.

We had the same FutureSDR receiver running on a Xilinx RFSoC FPGA board, on a normal laptop with an Aaronia Spectran V6 SDR, and in the web browser, using a HackRF.

Furthermore, we had the same receiver implemented on the FPGA of the RFSoC, using David’s IPEC framework for Inter-Processing Element Communication.

Since the FPGA and the CPU implementations had the same structure, we could dynamically decide where to make the cut between FPGA and CPU processing, which was reflected in the CPU load of the RFSoC’s ARM processor.

I gave a talk about Seify at the Software Defined Radio Academy 2023.

Seify is FutureSDR’s Rust SDR hardware abstraction library, which solves a problem similar to the one Soapy addresses in the C++ domain.

The preliminary talk is already on YouTube. A final version, including the live Q&A, will be available later.

The slides are also available:

I’m happy to be back at Paderborn University as Substitute Professor of the Computer Networks group.

I’ll be mainly responsible for teaching the Systems Software and Systems Programming lecture, including exercises and labs, which is a mandatory module in the Computer Science Bachelor curriculum.

Looking forward to this new experience.

Together with Lars Baumgärtner, I co-organized a workshop on Next Generation Wireless Edge Networks, which is part of a workshop series, organized in the context of our Collaborative Research Center MAKI.

I’m thankful for the awesome speakers that joined us and the interesting discussions at the event.

We also had quite some fun at the demo session :-)


I’ll be giving a tutorial on FutureSDR at NetSys 2023, which will take place from 4 to 8 September 2023 at the Hasso-Plattner-Institut in Potsdam, Germany.

NetSys, the conference on Networked Systems, is organized by the special interest group Communication and Distributed Systems (KUVS), which is anchored both in the German Computer Science society (Gesellschaft für Informatik (GI)) and in the Information Technology society (Informationstechnische Gesellschaft im VDE (ITG)).

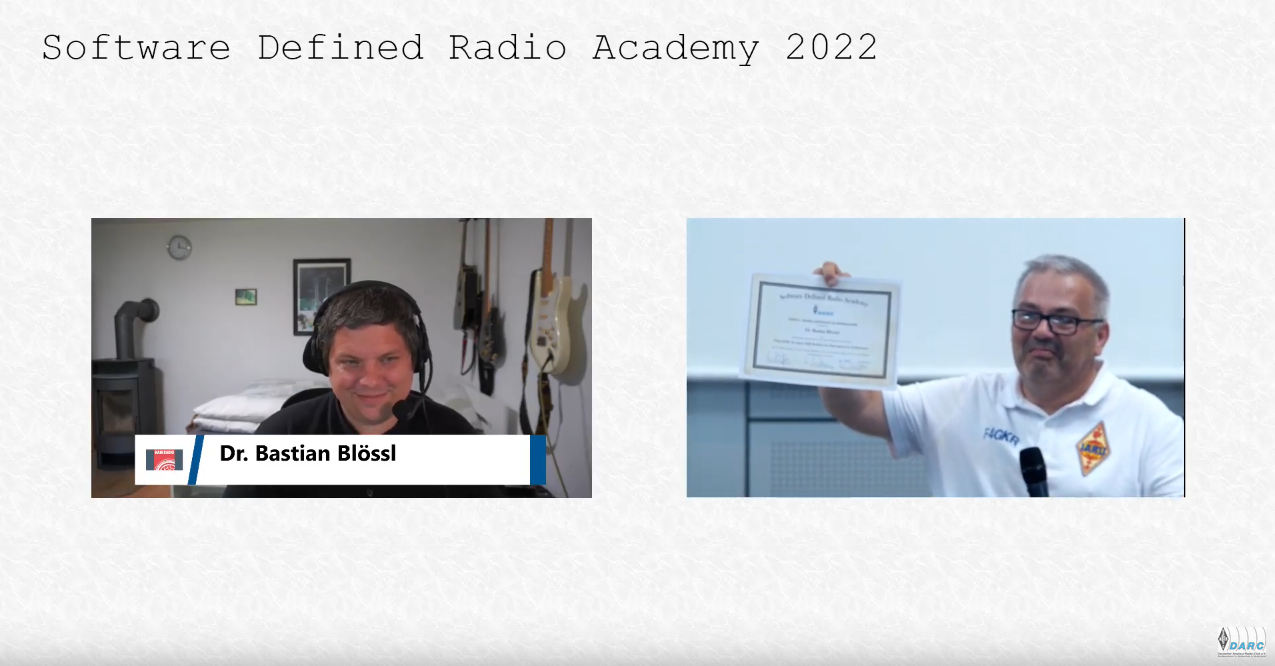

I’m happy to receive the Ulrich L. Rohde Award for outstanding contributions in the field of Software Defined Radio for my work on FutureSDR.

The award was handed over at the Software Defined Radio Academy, presented by IARU R1 President Sylvain Azarian and the DARC.
