FutureSDR Released!

I released my Rust SDR experiments as a project that I call FutureSDR and prepared a video to introduce its main features.

The code is available on GitHub.

I was happy to give a tutorial about Programming Software Defined Radios with GNU Radio at ACM WiSec 2021. This was a lot of fun to prepare but also a good excuse to spend a lot of money and get some more professional A/V equipment :-)

I also uploaded the IQ file that was recorded in the session and the GNU Radio module.

Our subproject of the Collaborative Research Center MAKI got funded :-)

It’s the first time I can hire a PhD student. So I’m looking for motivated candidates, who want to build fancy SDR prototypes and explore new concepts for SDR runtimes.

If you’re interested, drop me an email.

Yay, our subproject made it! 🍻 https://t.co/oi0FsPtOdS

— Bastian Bloessl (@bastibl) November 30, 2020

Josh Morman and I gave an update on our current efforts to build a new more modular runtime for GNU Radio that comes with pluggable schedulers and native support for accelerators.

If you’re interested in the topic

# A modular future for GNU Radio

— GNU Radio Project (@gnuradio) February 7, 2021

Josh Morman and @bastibl are showing the newsched experimental runtime for GNU Radio targeting more efficient and easy running on heterogeneous platforms.

Livestream and chat:https://t.co/fTz5F83qYT

Slides and Video:https://t.co/1HyR8wk9q3 pic.twitter.com/NRD30PoDaU

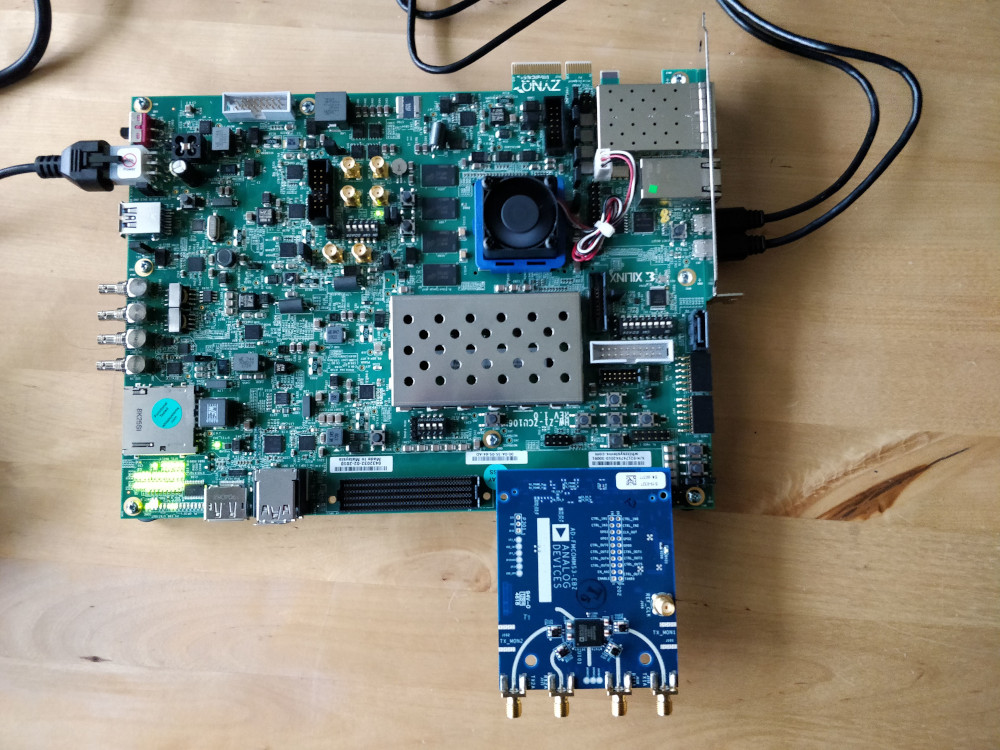

This is the second post on FutureSDR, an experimental async SDR runtime, implemented in Rust. While the previous post gave a high-level overview of the core concepts, this and the following posts will be about more specific topics. Since I just finished integration of AXI DMA custom buffers for FPGA acceleration, we’ll discuss this.

...and custom buffers for FPGA acceleration (Xilinx AXI DMA). Both blocking and async mode :-) https://t.co/1vmxNMwiuv pic.twitter.com/tms5IzdhdX

— Bastian Bloessl (@bastibl) January 27, 2021

The goal of the post is threefold:

The actual example is simple. We’ll use the FPGA to add 123 to a 32-bit integer. The data will be DMA’ed to the FPGA, which will stream the integers through the adder and copy the data back to CPU memory. It’s only slightly more interesting than a loopback example, but the actual FPGA implementation is, of course, not the point. There are drop-in replacements for FIR filters, FFTs, and similar DSP cores.
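To check data coming back from the FPGA, a software reference of the adder is handy. The following is a minimal Python sketch, not part of FutureSDR; the names `add_const` and `MASK32` are made up for illustration, and wrapping around on overflow is an assumption, since the behavior of the FPGA core in that case is not described here.

```python
MASK32 = 0xFFFFFFFF  # keep results within 32 bits (values treated as unsigned)


def add_const(samples, const=123):
    """Software reference for the adder: add `const` to each 32-bit value.

    Assumption: the result wraps around on overflow. The post does not
    state how the FPGA core handles this case.
    """
    return [(s + const) & MASK32 for s in samples]


if __name__ == "__main__":
    data = [0, 1, 42, 0xFFFFFFFF]
    print(add_const(data))
```

Comparing the output of such a reference with the buffer that was DMA'ed back is a quick sanity check for the whole data path.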

I only have a Xilinx ZCU106 board with a Zynq UltraScale+ MPSoC. But the example doesn’t use any fancy features and should work on any Zynq platform.

In the last year, I’ve been thinking about how GNU Radio and SDR runtimes in general could improve. I had some ideas in mind that I wanted to test with quick prototypes. But C++ and rapid prototyping don’t really go together, so I looked into Rust and created a minimalistic SDR runtime from scratch. Even though I’m still very bad at Rust, it was a lot of fun to implement.

One of the core ideas for the runtime was to experiment with async programming, which Rust supports through Futures.

Hence the name FutureSDR.

(Other languages might call similar constructs promises or co-routines.)

The runtime uses futures and it is a future, in the sense that it’s a placeholder for something that is not ready yet :-)
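For readers who have not used async programming before, here is a minimal sketch of the general idea in Python's `asyncio` rather than Rust. It is not FutureSDR code and says nothing about its API; the `source` and `sink` coroutines and the `None` end marker are purely illustrative. Two "blocks" run as cooperative tasks and exchange items through a bounded queue; whenever one of them cannot make progress, it awaits and lets the other one run.

```python
import asyncio


async def source(queue, n):
    """Produce n items, then a None marker to signal the end of the stream."""
    for i in range(n):
        await queue.put(i)  # suspends while the bounded queue is full
    await queue.put(None)


async def sink(queue, out):
    """Consume items until the end marker arrives."""
    while True:
        item = await queue.get()  # suspends while the queue is empty
        if item is None:
            break
        out.append(item)


async def run_flow(n=5):
    queue = asyncio.Queue(maxsize=2)  # small buffer to force task switches
    received = []
    await asyncio.gather(source(queue, n), sink(queue, received))
    return received


if __name__ == "__main__":
    print(asyncio.run(run_flow()))
```

Both coroutines run on a single thread; the event loop switches between them at the `await` points.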

FutureSDR is minimalistic and has far fewer features than GNU Radio. But, at the same time, it’s only ~5100 lines of code and served me well to quickly test ideas. I, furthermore, just implemented basic accelerator support and finally have the feeling that the current base design makes some sense.

Awww, I got some Vulkan custom buffers working for my Rust SDR runtime thing :-) I was experimenting with quite a lot of different APIs until I found something that somehow made sense to me. pic.twitter.com/iOcMNM5EII

— Bastian Bloessl (@bastibl) January 18, 2021

Overall, I think it has reached a state where it might be useful to experiment with SDR runtimes. So this post gives a bit of an overview and introduces the main ideas. In follow-up posts, I want to go into more detail on specific features and have an associated git commit introducing each feature.

Note that the code examples might not compile exactly as-is (e.g. I leave some irrelevant function parameters out).

Also there is, at the moment, no proper error handling (i.e., unwrap() all over the place).

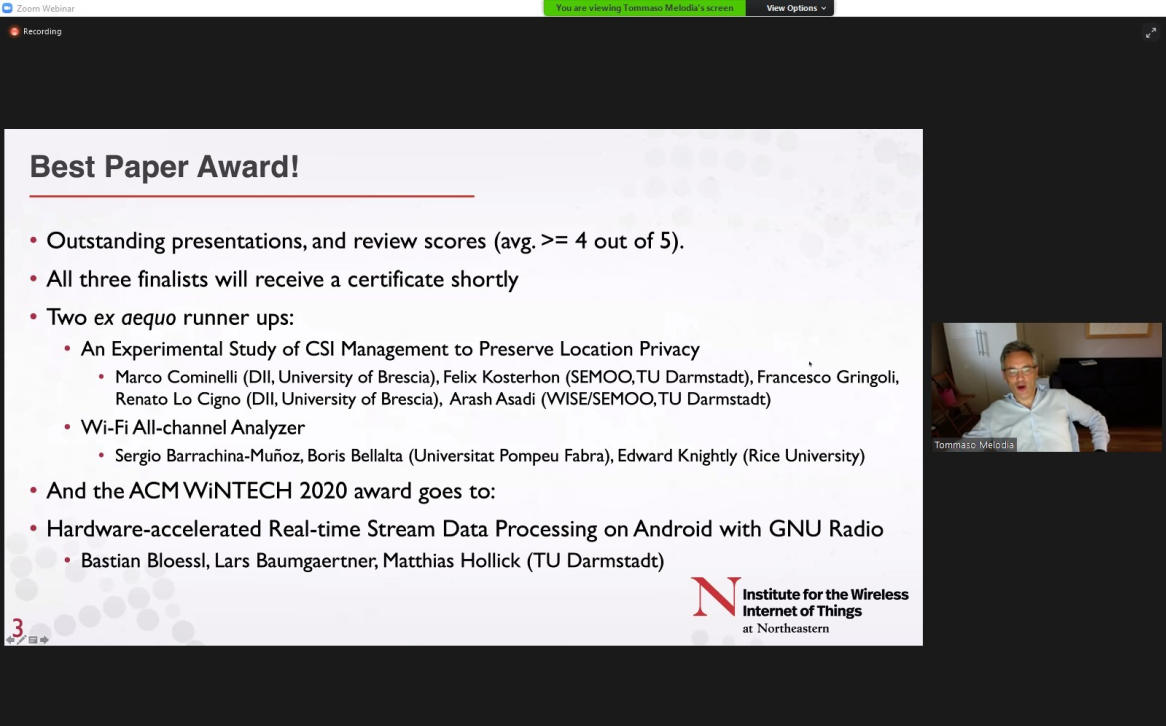

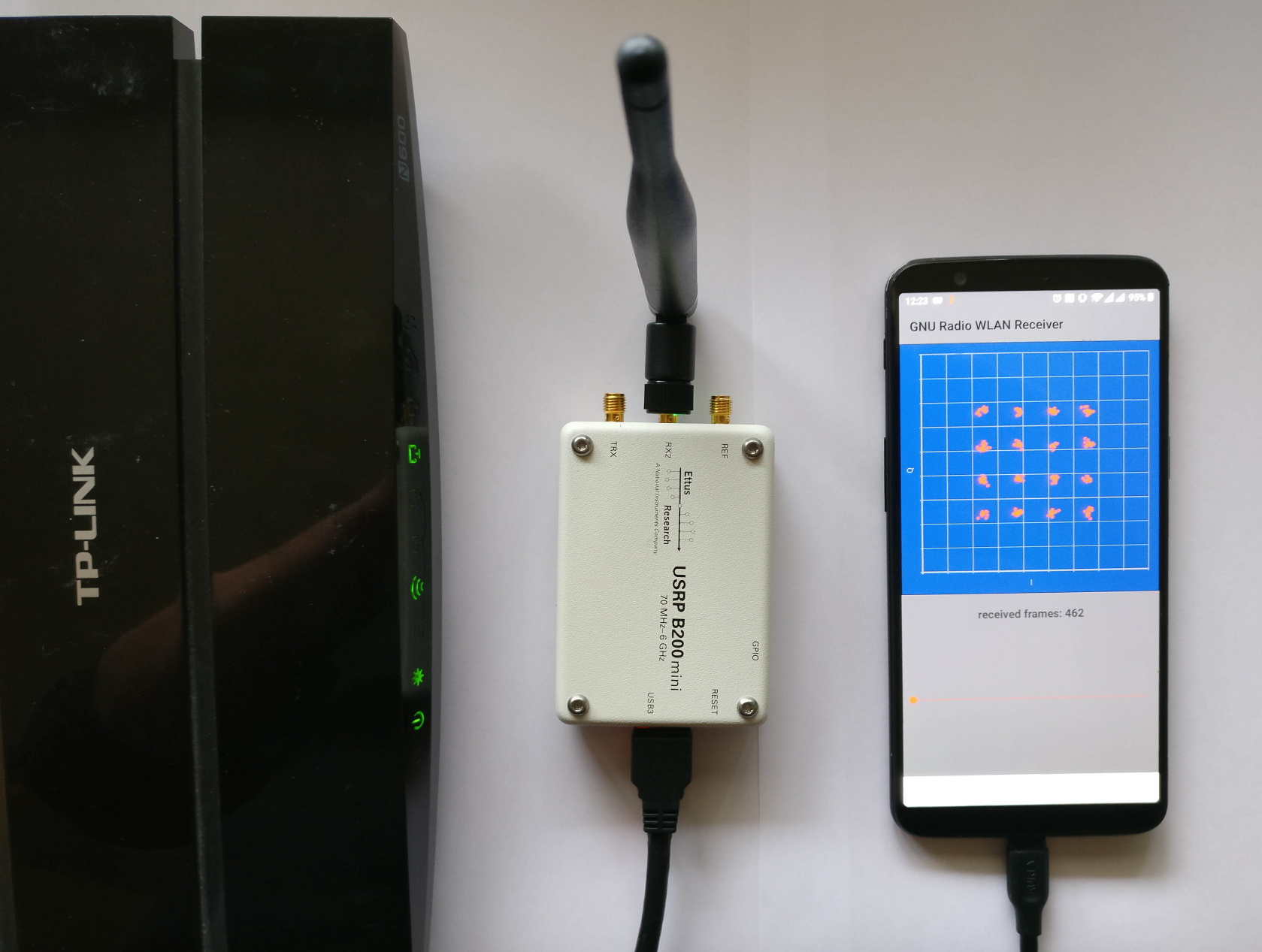

I’m happy to receive the Best Paper Award of ACM WiNTECH 2020 – a workshop on wireless testbeds that is held in conjunction with ACM MobiCom – for my paper about GNU Radio on Android.

I’m particularly happy, because I think it’s really great that this type of paper is appreciated at an academic venue 🍾 🍾 🍾

Bastian Bloessl, Lars Baumgärtner and Matthias Hollick, “Hardware-Accelerated Real-Time Stream Data Processing on Android with GNU Radio,” Proceedings of 14th International Workshop on Wireless Network Testbeds, Experimental evaluation and Characterization (WiNTECH’20), London, UK, September 2020.

[DOI, BibTeX, PDF and Details…]

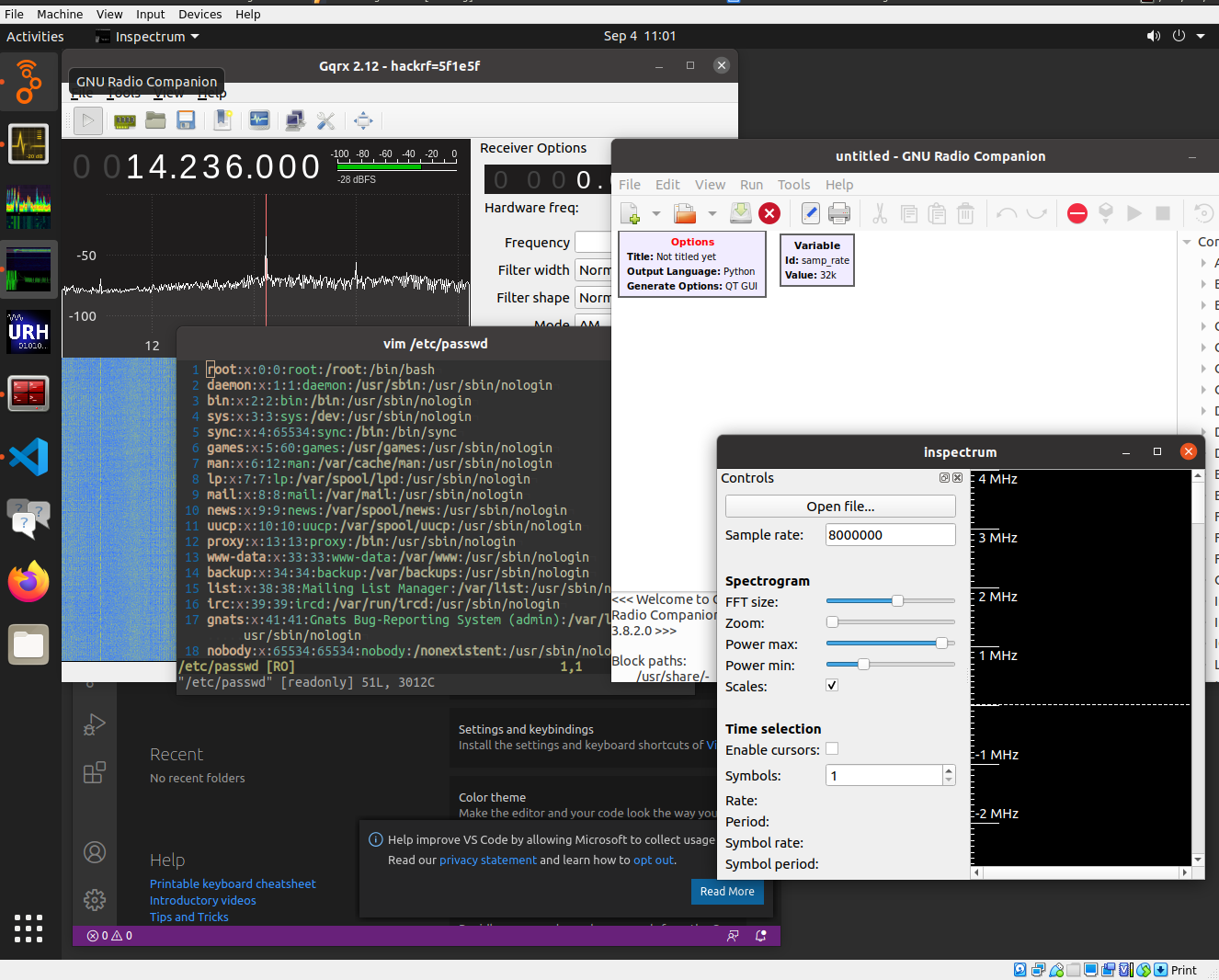

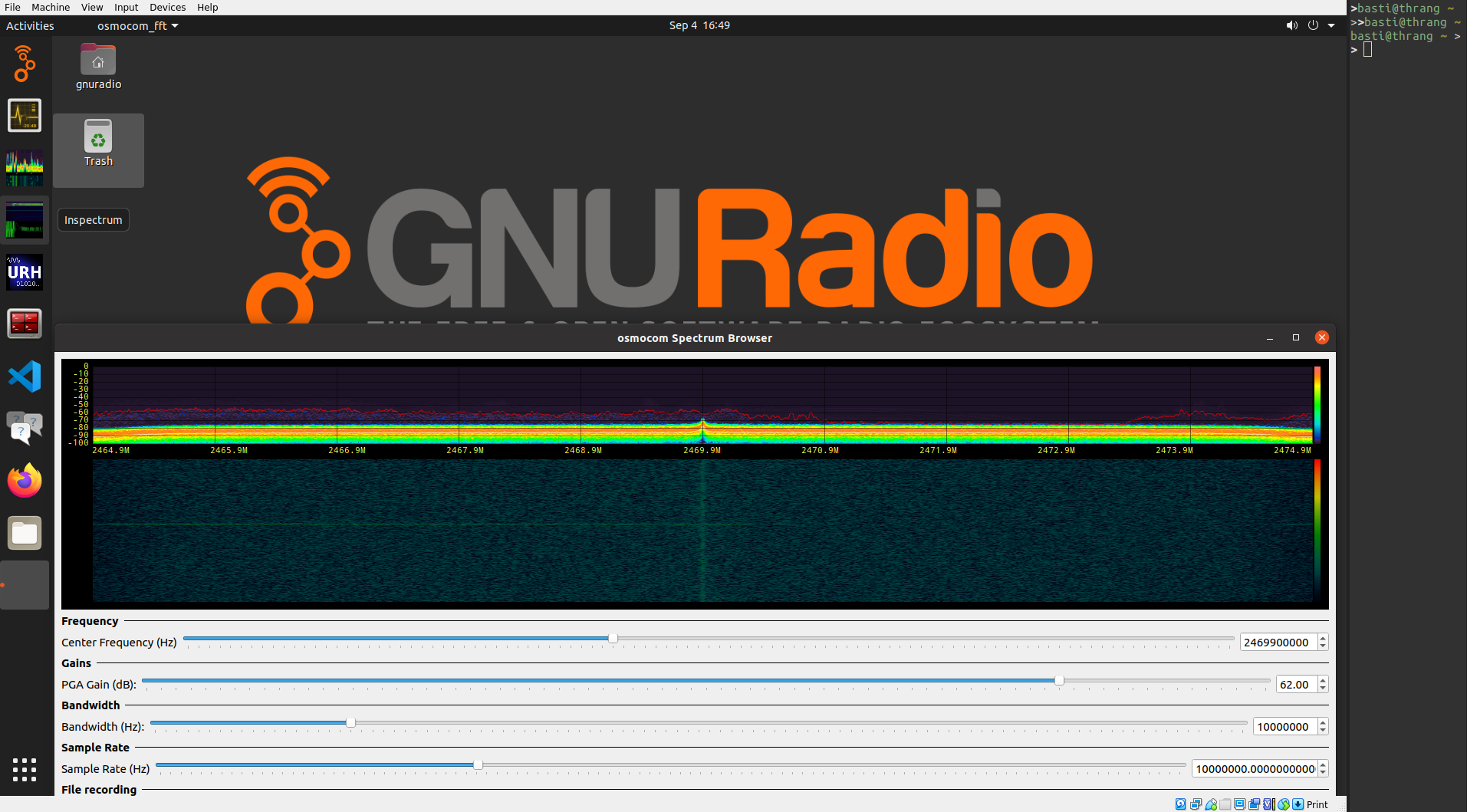

I recently updated instant-gnuradio, a project that uses Packer to create a VM with GNU Radio and many SDR-related applications pre-installed. I wanted to do that for quite a while, but GRCon20 was a good occasion, since we wanted to provide an easy-to-use environment to get started with SDR anyway.

I didn’t maintain the project in the last year, not even porting it to GNU Radio 3.8. This was mainly since the build relied on PyBombs, which was not maintained and broke every other day. (This is about to change btw, since we have two new very active maintainers. Yay!). But recently distribution packaging has improved quite a lot. The new build uses Josh’s PPAs, which provide recent GNU Radio versions. With this, the build is faster, simpler, and hopefully also more stable.

I also updated to Ubuntu 20.04 and added VS Code. Like the previous version it comes with Intel OpenCL preinstalled, supporting Fosphor out of the box.

The biggest advantage of the Packer build is that it is easy to customize the VM for workshops or courses (e.g., change branding like the background image, add a link to the course website in the favorites bar, install additional software, etc.). A good example might be the VM I built for Daniel Estévez’s GRCon workshop on satellite communications.

Some months ago, I looked into Tom Rondeau’s work on running GNU Radio on Android. It took me quite some time to understand what was going on, but I finally managed to put all pieces together and have GNU Radio running on my phone. A state-of-the-art real-time stream-data processing system and a comprehensive library of high-quality DSP blocks on my phone – that’s pretty cool. And once the toolchain is set up, it’s really easy to build SDR apps.

I put quite some work into the project, updated everything to the most recent version and added various new features and example applications. Here are some highlights of the current version:

armeabi-v7a and arm64-v8a).

I think this is pretty complete. To demonstrate that it is possible to run non-trivial projects on a phone, I added an Android version of my GNU Radio WLAN transceiver. Whether it runs in real-time depends, of course, on the processor, the bandwidth, etc.

The whole project is rather complex. To make it more accessible and hopefully more maintainable, I

- created a git repository with build scripts for the toolchain. It includes GNU Radio and all its dependencies as submodules pointing (if necessary) to patched versions of the library.
- created a Dockerfile that can be used to set up a complete development environment in a container. It includes the toolchain, Android Studio, the required Android SDKs, and example applications.

The project is now available on GitHub.

It was a major effort to put everything together, clean up the code, and add some documentation. There might be rough edges, but I’m happy to help and look forward to feedback.

If you’re using the GNU Radio Android toolchain in your work, I’d appreciate a reference to:

Bastian Bloessl, Lars Baumgärtner and Matthias Hollick, “Hardware-Accelerated Real-Time Stream Data Processing on Android with GNU Radio,” Proceedings of 14th International Workshop on Wireless Network Testbeds, Experimental evaluation and Characterization (WiNTECH’20), London, UK, September 2020.

[DOI, BibTeX, PDF and Details…]

In the last post, we set up an environment to conduct stable and reproducible performance measurements. This time, we’ll do some first benchmarks. (A more verbose description of the experiments is available in [1]. The code and evaluation scripts of the measurements are on GitHub.)

The simplest and most accurate thing we can do is to measure the run time of a flowgraph when processing a given workload. It is the most accurate measure, since we do not have to modify the flowgraph or GNU Radio to introduce measurement probes.
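The idea of timing the whole run from the outside can be sketched in a few lines. This is a Python illustration only, not the C++ code used for the measurements; `time_run` and `dummy_flowgraph` are hypothetical names, and the dummy workload merely stands in for running a flowgraph to completion.

```python
import time


def time_run(workload, repetitions=5):
    """Run `workload` several times and return wall-clock durations in seconds.

    Only the total run time is measured from the outside, so the workload
    itself needs no measurement probes.
    """
    durations = []
    for _ in range(repetitions):
        start = time.perf_counter()
        workload()
        durations.append(time.perf_counter() - start)
    return durations


def dummy_flowgraph():
    # Hypothetical stand-in for running a flowgraph until it finishes.
    sum(range(100_000))


if __name__ == "__main__":
    print(time_run(dummy_flowgraph, repetitions=3))
```

Repeating the run several times is what later allows statistics over the individual measurements.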

The problem with benchmarking a real-time signal processing system is that monitoring inevitably changes the system and, therefore, its behavior. It’s like the system behaves differently when you look at it. This is not an academic problem. Just recording a timestamp can introduce considerable overhead and change scheduling behavior.

In the first experiment, we consider flowgraph topologies like this.

They allow us to scale the number of pipes (i.e., parallel streams) and stages (i.e., blocks per pipe). All flowgraphs are created programmatically with a C++ application to avoid any possible impact from Python bindings. My system is based on Ubuntu 19.04 and runs GNU Radio 3.8, which was compiled with GCC 8.3 in release mode.

GNU Radio supports two connection types between blocks: a message passing interface and a ring buffer-based interface.

We start with the message passing interface. Since we want to focus on the scheduler and not on DSP performance, we use PDU Filter blocks, which just forward messages through the flowgraph. Our messages, of course, do not contain the key that would be filtered out. (Update: And this is, of course, where I messed up in the initial version of the post.) I, therefore, created a custom block that just forwards messages through the flowgraph and added a debug switch to make sure that this doesn’t happen again.

Before starting the flowgraph, we create and enqueue a given number of messages in the first block.

Finally, we also enqueue a done message, which signals the scheduler to shut down the block.

We used 500 Byte blobs, but since messages are forwarded through pointers, the type of the message does not have a sizeable impact.

The run time of top_block::run() looks like this:

Note that we scaled the number of pipes and stages jointly, i.e., an x-axis value of 100 corresponds to 10 pipes and 10 stages. The error bars indicate confidence intervals of the mean for a confidence level of 95%.
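For reference, a confidence interval of the mean can be computed from repeated run times roughly as follows. This is a sketch using only the Python standard library; it uses a normal approximation, which is an assumption on my side (the evaluation scripts may well use a Student-t quantile, which gives wider intervals for few repetitions), and the function name and sample values are made up.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(samples, level=0.95):
    """Return (low, high) bounds of the confidence interval of the mean.

    Assumption: normal approximation. For few repetitions, a Student-t
    quantile would be more appropriate and yield a wider interval.
    """
    m = mean(samples)
    sem = stdev(samples) / sqrt(len(samples))  # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95%
    return m - z * sem, m + z * sem


if __name__ == "__main__":
    run_times = [1.0, 2.0, 3.0, 4.0, 5.0]  # made-up durations in seconds
    print(mean_confidence_interval(run_times))
```

Note that a narrow interval only says that the mean is well estimated; it says nothing about the shape of the underlying distribution.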

The good news is: GNU Radio scales rather well, even for a large number of threads. In this case, up to 400 threads on 4 CPU cores. While it is worse than linear, we were not able to reproduce the horrendous results presented at FOSDEM. These measurements were probably conducted with an earlier version of GNU Radio, containing a bug that caused several message passing blocks to busy-wait. With this bug, it is plausible that performance collapsed once the number of blocks exceeded the number of CPUs. We will investigate it further in the following posts.

We, furthermore, can see that the difference between normal and real-time priority is only marginal.

Not so for the buffer interface. Here, we used a Null Source followed by a Head block to pipe 100e6 4-byte floats into the flowgraph. To focus on the scheduler and not on DSP, we used Copy blocks in the pipes, which just copy the samples from the input to the output buffer. Each pipe was, furthermore, terminated by a Null Sink.

Like in the previous experiment, we scale the number of pipes and stages jointly and measure the run time of top_block::run().
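The structure of the buffer experiment can be sketched as an edge list. This is a Python illustration, not the C++ application used for the benchmarks; the block names are hypothetical, and a single shared Null Source and Head block feeding all pipes is my reading of the description, so treat it as an assumption.

```python
def build_topology(pipes, stages):
    """Return the flowgraph as a list of (upstream, downstream) edges.

    Assumption: one Null Source and one Head block feed all pipes; each
    pipe consists of `stages` Copy blocks and ends in its own Null Sink.
    """
    edges = [("null_source", "head")]
    for p in range(pipes):
        prev = "head"
        for s in range(stages):
            block = f"copy_{p}_{s}"
            edges.append((prev, block))
            prev = block
        edges.append((prev, f"null_sink_{p}"))
    return edges


def count_blocks(edges):
    """Count the distinct blocks that appear in the edge list."""
    return len({name for edge in edges for name in edge})


if __name__ == "__main__":
    edges = build_topology(pipes=10, stages=10)
    print(len(edges), count_blocks(edges))
```

With 10 pipes and 10 stages, this gives the 100 Copy blocks that the x-axis refers to, plus the source, the Head block, and one sink per pipe.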

Again, good news: the performance of the buffer interface also scales linearly with the number of blocks. (Even though we will later see that there is quite some overhead from thread synchronization.)

What’s not visible from the plot is that even with the CPU governor set to performance, the CPU might reduce the frequency due to heat issues. So while the confidence intervals indicate that we have a good estimate of the mean, the underlying individual measurements were bimodal (or multi-modal), depending on the CPU frequency.

What might be surprising is that real-time priority performs so much better. For 100 blocks (10 pipes and 10 stages), it is about 50% faster. Remember, we created a dedicated CPU set for the measurements to avoid any interference from the operating system. So there are no priority issues.

To understand what’s going on, we have to look into the Linux process scheduler. But that’s a topic for a later post.

[1] Bastian Bloessl, Marcus Müller and Matthias Hollick, “Benchmarking and Profiling the GNU Radio Scheduler,” Proceedings of 9th GNU Radio Conference (GRCon 2019), Huntsville, AL, September 2019. [BibTeX, PDF and Details…]